When Is Count Vectorizer Better Than TF-IDF?

- lucreceshin

- Jan 9, 2021

Too Many Options in Machine Learning

Machine learning has so many techniques and terms that it can be hard to grasp the essence of every single one. More papers are on their way (especially in deep learning), which means more advanced techniques keep getting introduced, with astonishing creativity and intimidating formulas. However, when it comes down to solving a real problem, what matters is not whether a technique is simple or complex, but how well it fits the characteristics of the data. It would be great if we had enough expertise on the data to know exactly which technique is best. Since this is usually not the case, it is important to try out different techniques, see which ones work better or worse, and build intuition about the data. This experiment-first approach is a crucial part of the trial-and-error process we go through when solving a machine learning problem.

Count Vectorizer vs. TF-IDF

One interesting case I found, where a simpler technique outperformed a widely used, more advanced one, involved text encoding. For text data consisting of different sentences, count vectorizer is a simple encoder that transforms each sentence into a vector (of length = number of unique words across all sentences) where each element is the number of times a word appears in the sentence. For example, {"this is home", "home is home", "this is cat"} becomes { [1,1,1,0], [0,1,2,0], [1,1,0,1] } for words ["this","is","home","cat"].

TF-IDF (term frequency-inverse document frequency) takes a more advanced approach that considers not only how many times a word appears in a sentence, but also how many sentences in the whole set contain that word. This way, a word that appears in a lot of sentences is considered less important and possibly a stop word. For the same text above, tf-idf produces approximately { [.4,0,.4,0], [0,0,.8,0], [.4,0,0,1.1] } for words ["this","is","home","cat"]. These values are consistent with the unsmoothed formula tf × ln(N / df), where N is the number of sentences and df is the number of sentences that contain the word. The word "is" is encoded as 0 because it appears in all 3 sentences, which effectively treats it as a stop word.
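To make the two encodings concrete, here is a minimal sketch in plain Python that rebuilds the count vectors for the three example sentences and then computes tf-idf for them. It assumes the unsmoothed formula tf × ln(N / df) and simple whitespace tokenization; library implementations often add smoothing and normalization, so their numbers can differ.

```python
import math

sentences = ["this is home", "home is home", "this is cat"]
vocab = ["this", "is", "home", "cat"]  # same word order as in the example

# Count vectorizer: one row per sentence, one column per vocabulary word.
counts = [[s.split().count(w) for w in vocab] for s in sentences]

# Document frequency: number of sentences that contain each word.
n_docs = len(sentences)
df = [sum(1 for row in counts if row[j] > 0) for j in range(len(vocab))]

# Unsmoothed tf-idf (assumed formula): tf * ln(N / df), rounded for display.
tfidf = [
    [round(tf * math.log(n_docs / df[j]), 2) for j, tf in enumerate(row)]
    for row in counts
]

print(counts)  # [[1, 1, 1, 0], [0, 1, 2, 0], [1, 1, 0, 1]]
print(tfidf)   # [[0.41, 0.0, 0.41, 0.0], [0.0, 0.0, 0.81, 0.0], [0.41, 0.0, 0.0, 1.1]]
```

The column for "is" is 0 in every row because ln(3 / 3) = 0, while "cat" gets the largest weight because it appears in only one sentence.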

Cuisine Dataset

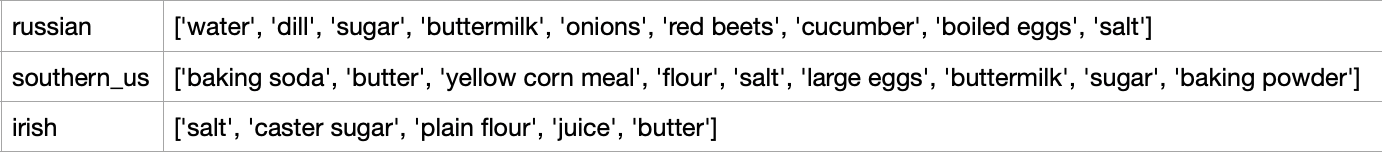

The text data I was using was from Kaggle's What's Cooking?- Kernels Only competition, where the task was to predict a dish's cuisine given its list of ingredients. There were 20 different cuisines to predict, and the dataset looked like this:

In this dataset, some ingredients, such as salt and sugar, are very common and act much like stop words. The more distinctive ingredients that are characteristic of a cuisine should be the important features that help distinguish between cuisines. With this in mind, I tried both count vectorizer and tf-idf as the text encoding step for the ingredient lists.
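As a rough sketch of this encoding step, the snippet below applies scikit-learn's `CountVectorizer` and `TfidfVectorizer` to a few ingredient lists. The dishes are made up for illustration, and passing a callable `analyzer` so that each ingredient stays a single token (for example "soy sauce") is my assumption, not necessarily how the original experiment tokenized the data.

```python
from sklearn.feature_extraction.text import CountVectorizer, TfidfVectorizer

# Hypothetical dishes; each one is a list of ingredient strings.
dishes = [
    ["soy sauce", "garlic", "salt", "sesame oil"],
    ["olive oil", "garlic", "salt", "basil"],
    ["soy sauce", "sugar", "salt", "ginger"],
]


def identity(ingredients):
    """Treat each ingredient as one token, so "soy sauce" is not split."""
    return ingredients


count_vec = CountVectorizer(analyzer=identity)
tfidf_vec = TfidfVectorizer(analyzer=identity)

X_count = count_vec.fit_transform(dishes)  # sparse matrix of raw counts
X_tfidf = tfidf_vec.fit_transform(dishes)  # sparse matrix of tf-idf weights

# Column order of both matrices (the vocabulary is sorted alphabetically).
feature_names = sorted(count_vec.vocabulary_, key=count_vec.vocabulary_.get)
print(feature_names)
print(X_count.toarray())
print(X_tfidf.toarray().round(2))
```

Note that `TfidfVectorizer` by default smooths the idf term, adds 1 to it, and L2-normalizes each row, so an ingredient that appears in every dish (here "salt") is weighted down but not set to exactly 0.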

Result with Different Text Encodings

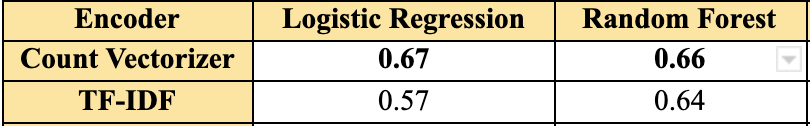

The resulting performance with logistic regression and random forest model looked like this :

The decimals in the table are the validation F1-scores of each model with the corresponding text encoder. I won't go into the details of each model, but I wanted to show that count vectorizer performed better than tf-idf for both the logistic regression and the random forest models. This was counter-intuitive at first, since tf-idf should down-weight common ingredients and focus more on distinctive ones. So I looked at the data more closely.
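A comparison like this can be set up in a few lines with scikit-learn pipelines. The sketch below is not the original experiment: the data is a small synthetic stand-in, the hyperparameters are arbitrary, and macro-averaged F1 is an assumption, since the averaging method is not stated here. It only shows the shape of the loop over encoders and models.

```python
import random

from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_extraction.text import CountVectorizer, TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import f1_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline

# Synthetic stand-in for the Kaggle data (hypothetical ingredients and labels).
rng = random.Random(0)
common = ["salt", "sugar", "garlic", "onion"]
typical = {
    "chinese": ["soy sauce", "ginger", "sesame oil", "scallion"],
    "italian": ["olive oil", "basil", "parmesan", "tomato"],
    "mexican": ["tortilla", "cumin", "cilantro", "lime"],
}
dishes, labels = [], []
for cuisine, ingredients in typical.items():
    for _ in range(40):
        dishes.append(rng.sample(ingredients, 2) + rng.sample(common, 2))
        labels.append(cuisine)

X_train, X_val, y_train, y_val = train_test_split(
    dishes, labels, test_size=0.25, stratify=labels, random_state=0
)


def identity(ingredients):
    """Keep each ingredient as a single token."""
    return ingredients


encoders = {"count": CountVectorizer, "tf-idf": TfidfVectorizer}
models = {
    "logistic regression": lambda: LogisticRegression(max_iter=1000),
    "random forest": lambda: RandomForestClassifier(n_estimators=100, random_state=0),
}

scores = {}
for enc_name, make_encoder in encoders.items():
    for model_name, make_model in models.items():
        pipeline = make_pipeline(make_encoder(analyzer=identity), make_model())
        pipeline.fit(X_train, y_train)
        preds = pipeline.predict(X_val)
        # Macro averaging is an assumption made for this sketch.
        scores[(enc_name, model_name)] = f1_score(y_val, preds, average="macro")

for key, value in scores.items():
    print(key, round(value, 3))
```

On toy data like this the scores say nothing about which encoder is better; the point is that swapping the encoder is a one-line change, which makes this kind of side-by-side comparison cheap to run.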

"Soy Sauce"

If you see "soy sauce" as an ingredient of a dish, most people would agree that the dish is much more likely to be East or Southeast Asian than European. This is natural for us humans, since we have decades of experience tasting different cuisines. But for a machine learning model that only knows about the data I provided, this is not intuitive. The following table shows the number of occurrences of "soy sauce" in each of the 20 cuisines in the dataset:

Although "soy sauce" is much more frequent in East/Southeast Asian and Southern US cuisines, it appears in ALL cuisines. Under tf-idf, a term that appears almost everywhere is weighted down, and with the unsmoothed formula (idf = ln(N / df)) it becomes exactly 0 once it appears in every document, which is the case for "soy sauce" when document frequency is counted per cuisine. This is misleading, since "soy sauce" is characteristic of only a subset of the cuisines. Count vectorizer, on the other hand, will count "soy sauce" in each dish as 1. This suggests that count vectorizer can work better when some words occur in the sentences of all classes but are characteristic of only a subset of them. I would not have made this discovery if I had picked a single encoder from the beginning without comparing different ones.
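The effect is easy to check on a small example. The sketch below uses made-up dishes and the unsmoothed idf = ln(N / df), and it compares two ways of defining a "document": one per dish, or one per cuisine (all dishes of a cuisine merged). Both the data and the per-cuisine grouping are assumptions for illustration.

```python
import math

# Hypothetical dishes: (cuisine, ingredients). "soy sauce" shows up in every
# cuisine, but far more often in the Chinese dishes.
dishes = [
    ("chinese", ["soy sauce", "ginger", "salt"]),
    ("chinese", ["soy sauce", "garlic", "salt"]),
    ("chinese", ["soy sauce", "scallion", "salt"]),
    ("italian", ["olive oil", "basil", "salt"]),
    ("italian", ["soy sauce", "tomato", "salt"]),
    ("french", ["butter", "thyme", "salt"]),
    ("french", ["soy sauce", "butter", "salt"]),
]


def idf(term, documents):
    """Unsmoothed idf = ln(N / df); assumes the term occurs at least once."""
    df = sum(1 for doc in documents if term in doc)
    return math.log(len(documents) / df)


# Option 1: every dish is its own document.
per_dish = [ingredients for _, ingredients in dishes]

# Option 2: all dishes of a cuisine are merged into one document.
merged = {}
for cuisine, ingredients in dishes:
    merged.setdefault(cuisine, set()).update(ingredients)
per_cuisine = list(merged.values())

for term in ["soy sauce", "salt", "ginger"]:
    print(term, round(idf(term, per_dish), 2), round(idf(term, per_cuisine), 2))
# soy sauce 0.34 0.0
# salt 0.0 0.0
# ginger 1.95 1.1
```

In both views "soy sauce" ends up with a much lower weight than a rarer ingredient such as "ginger", even though it is a strong signal for one group of cuisines. A raw count of 1 per dish keeps that signal at full strength and leaves it to the model to learn which cuisines it points to.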

Self-Reflection

In the beginning, I honestly thought that tf-idf would perform better than count vectorizer in every case, since it is a more widely used, more advanced technique. It is a common misconception that a "fancier" technique will always work better in a machine learning problem. To overcome this, I realized that running many experiments through trial and error is essential, as is staying curious about the data at hand and asking questions.
